Automated DB backups of Bahmni

Gurpreet Luthra

Preethi

Rahul De

Sravanthi N. S. CH.

Geographically Separate Locations

If you are considering a backup strategy for production data, consider storing the backups offsite. A geographically separate location ensures that events like flooding or fire don't destroy all the backups too!

Please read this document on how to perform an encrypted backup on a USB.

- This section on Bahmni backups is for the legacy version of Bahmni that runs on CentOS (prior to Bahmni Lite v1.0). The "bahmni -i <action>" commands are not available on the Docker/Kubernetes version of Bahmni. For the Docker version of Bahmni, see the Docker documentation: Performing a Backup / Restore of Container Data & Files (docker).

- A training was conducted in Dec-2023 on how to perform a backup of all data from Bahmni on CentOS v0.92/0.93, which is also very useful. Please see the videos in the training guide: Migrating Bahmni from CentOS to Docker (Training)

Please read this document on overall Bahmni backup/restore from v0.88 onwards: Backup/Restore commands

DB Backup using bahmni command line tool (available from v0.81 onwards)

The 'bahmni' command line tool is available on the machine where the 'bahmni-installer' RPM is installed. See: Install Bahmni on CentOS, Step 2: Perform the following steps to install the RPMs

To take a database backup, run the following command:

bahmni -i local db-backup

If a custom inventory file is set up, run the following command instead:

bahmni -i <inventory_file_name> db-backup

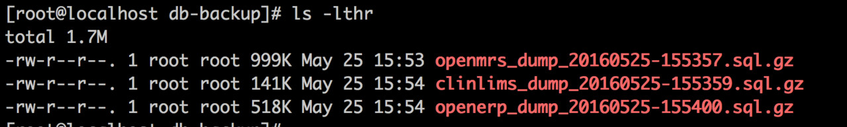

The command will take the backup of each database into a seperate file in the machine where the databases are present at file location /db-backup as below:

Optionally, it would also allow you to copy the backup to the machine from where the command is executed by shooting the following question:

Do you want to copy db backup to local machine in /db-backup directory? y/N: y

This is useful in a multi-machine setup where the databases reside on different machines. When all databases are on the same machine from which the command is executed, answer 'N' to the above question.
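To pick up the most recent dump of a given database from /db-backup, something like the following sketch may help. It assumes the dumps are named `<db>_dump_<YYYYMMDD-HHMMSS>.sql.gz`, as in the restore examples on this page; `latest_dump` is a hypothetical helper and is not part of the bahmni tool.

```shell
#!/bin/sh
# Hypothetical helper (not part of the bahmni tool): print the newest dump
# for one database. Assumes names like <db>_dump_<YYYYMMDD-HHMMSS>.sql.gz,
# so that a plain lexical sort puts the most recent file last.
latest_dump() {
    db="$1"
    dir="${2:-/db-backup}"
    # List matching files; stay quiet (and print nothing) if none match.
    ls -1 "$dir"/"$db"_dump_*.sql.gz 2>/dev/null | sort | tail -n 1
}
```

For example, `latest_dump openmrs` prints the path of the newest openmrs dump under /db-backup, or nothing if no dump exists.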

DB Restore using bahmni command line tool (available from v0.81 onwards)

To restore a database, use the `db-restore` command of the bahmni command line tool. The command restores only one database at a time; it drops the database if it already exists and then restores the specified dump file.

bahmni -db <database_name> -path <zipped_sql_file_path> db-restore

# If custom inventory file is present

bahmni -i <inventory_file_name> -db <database_name> -path <zipped_sql_file_path> db-restore

# Examples:

bahmni -i jss -db openmrs -path /db-backup/openmrs_dump_20160525-155357.sql.gz db-restore

bahmni -i jss -db clinlims -path /db-backup/clinlims_dump_20160525-155359.sql.gz db-restore
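Because db-restore drops the existing database before loading the dump, it can be worth checking that the dump file is intact first. The following is a minimal sketch that only relies on `gzip -t`; `check_dump` is a hypothetical helper name, not a bahmni command.

```shell
#!/bin/sh
# Hypothetical helper: check that a gzipped dump exists and passes
# gzip's integrity test before it is handed to db-restore.
check_dump() {
    dump="$1"
    if [ ! -f "$dump" ]; then
        echo "missing: $dump"
        return 1
    fi
    # gzip -t reads the whole archive and fails on truncation or corruption.
    if gzip -t "$dump" 2>/dev/null; then
        echo "ok: $dump"
    else
        echo "corrupt: $dump"
        return 1
    fi
}
```

A restore could then be guarded as `check_dump <zipped_sql_file_path> && bahmni -db <database_name> -path <zipped_sql_file_path> db-restore`. Note that this only validates the gzip container, not the SQL inside it.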

Automated db-backup

Download the backup script

wget -P {path_to_save_script} https://gist.githubusercontent.com/sravanthi17/27e239bfe1e0773d982f7b715fa582b3/raw/178ccc164693d9c134dd3324387ce38b3dd7783b/db-backup.sh

Replace {path_to_save_script} with the directory where the script should be saved. The script is downloaded there as db-backup.sh. Make it executable (chmod +x {path_to_save_script}/db-backup.sh) so that it can be run directly.

Create a crontab

If you want to set up an automated backup schedule, create a crontab entry that triggers the script periodically. For example:

# Edit the crontab file of root user

crontab -u root -e

# Make entry as (for running twice a day, at 2 PM and 10 PM)

00 14,22 * * * sudo {path_to_save_script}/db-backup.sh

crontab may fail for sudo

cron may fail silently for the above entry on some installations, because the entry and the script use sudo. To allow sudo commands to run from cron, you need to disable requiretty: run the visudo command and comment out the line: Defaults requiretty

For more details on this read: http://unix.stackexchange.com/questions/49077/why-does-cron-silently-fail-to-run-sudo-stuff-in-my-script
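To see whether requiretty is still active before editing anything, a check along these lines can be used. It is only a sketch: `has_requiretty` is a hypothetical helper, it looks at a single file (by default /etc/sudoers, which needs root to read), and it does not inspect files under /etc/sudoers.d or per-user Defaults entries.

```shell
#!/bin/sh
# Hypothetical helper: succeed if the given sudoers file still contains an
# active (uncommented) "Defaults requiretty" line.
has_requiretty() {
    file="${1:-/etc/sudoers}"
    # Commented lines start with '#', and "Defaults !requiretty" has a '!'
    # before the option, so neither matches this pattern.
    grep -Eq '^[[:space:]]*Defaults[[:space:]]+requiretty' "$file"
}
```

Run as root, `has_requiretty && echo "requiretty is still enabled"` prints the message only when the line is present and uncommented. Always make the actual change through visudo.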

# Add this entry to the crontab to delete files older than 15 days from the /db-backup folder (runs every evening at 6 PM)

00 18 * * * /usr/bin/find /db-backup -type f -mtime +15 -exec /bin/rm -f {} \;

For more examples on crontab entries read this: crontab
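Before enabling the cleanup entry above, it may be useful to preview which files it would remove. The sketch below runs the same `find` test with `-print` instead of `-exec rm`; `list_old_backups` is a hypothetical helper name, and the defaults mirror the /db-backup folder and 15-day threshold used on this page.

```shell
#!/bin/sh
# Hypothetical helper: list the files the cleanup entry would delete,
# without deleting anything.
list_old_backups() {
    dir="${1:-/db-backup}"
    days="${2:-15}"
    # Same selection as the crontab entry, but print instead of rm.
    find "$dir" -type f -mtime +"$days" -print
}
```

Calling `list_old_backups` with no arguments lists the files under /db-backup that are older than 15 days; `list_old_backups /db-backup 30` previews a 30-day retention instead.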

The Bahmni documentation is licensed under Creative Commons Attribution-ShareAlike 4.0 International (CC BY-SA 4.0)